But also ...

.. who gets to decide what is and isn't Writing?

In my last post, Don’t Call it Writing, I came out swinging.

I was triggered by a recent Guardian piece in which Cornell Tech academics in New York asked the question: should writers using A.I. to enhance their work now be allowed to call the work their own? If you missed it, my answer was a firm NO.

Why was I so triggered? What nerve did their question brush up against? Was my strident response warranted? And was it the last word I want to say on the subject?

I don’t like to consider myself a rigid thinker, so when an A.I.-assisted writer here in the Substack ecosystem called me out on some aspects of my position, I reflected on what I’d said, and on what he said, and I have continued mulling over where I want to land in the discussion of A.I. involvement in the creative process.

In general, I don’t like to land anywhere permanent when it comes to issues in an ever-changing landscape. I prefer to acknowledge that I don’t and can’t know everything, and to recognise shifting contexts, nuances and shades of grey. So it’s quite unusual for me to step into the fray. But I did it, and I stand by most of what I said.

Essentially, my arguments were these:

1. If a writer’s work is A.I.-assisted in any way, that should be disclosed.

2. Authors whose work has been scraped to build the LLMs currently being used to support or enhance the process of A.I.-assisted writers should be recognised and compensated for their contributions.

3. The ‘writing’ process of A.I.-assisted works should go by a different verb … i.e. not writing.

Most responses from fellow writers were supportive, but clearly my critic was not, and certainly some of his points were valid. He found my assertions contemptuous of writers embracing A.I. tools, and he questioned my right to draw boundaries around which verbs are used for what. And actually, that’s fair enough. I triggered him, because I called his process illegitimate.

I’d like to argue that I was not pointing the finger at experimental writers so much as challenging those A.I.-invested stakeholders who are trying to wash away all the boundaries in this discussion. But I can see how creators who are embracing the tools may have felt attacked.

So now I have even more questions than before, including: was my thinking too rigid? Was I lashing out from a sense of injustice, because I know how long it takes and how difficult it is to create something 100% human-authored, and it’s not fair if the playing field becomes even more hostile to those who refuse to adopt the new rules and tools? If so, is a ‘not-fair’ stance just petulant? I’d love to know what you think.

I still maintain points one and two: disclosure is vital, and authors whose work has been mined in the building of profit-driven LLMs deserve financial and conceptual recognition. And I still think questions over copyright and royalty-share need to remain on the table. I also believe using LLMs to generate rough drafts, structural edits and sentence-whispering guidance sends the finished product into a different creative category, separate from purely human-authored writing. And I think we should hold that line.

But I agree that I don’t get to define verbs and decide who is or isn’t writing.

Also, the ‘brainstorming and idea-sparking’ part of the discussion is a sticking point, because the question of where ideas come from in the first place is complex, particularly now, in our story-and-information-saturated age.

A question of Synthesis

Is it possible to tease out questions of legitimacy in this space?

When we learn our craft as writers, or creators in any medium, it’s fair to say we draw on the works that have come before us. We synthesise them, and the world around us, using our own brains and the guidance and observations of those who teach us. I’m not a neuroscientist, but I think this is a given: no writer or artist exists or develops within a vacuum.

You could say that everything we ever come across falls into the category of the lived experiences that inform our writing, including but not limited to: the books and films we love and don’t love … the conversations we have or overhear … the music we listen to … the places we visit … the global and local injustices, triumphs and tragedies we witness or read about … our joys and griefs … the words, sights, smells and encounters we mull over. Importantly, the ‘mulling over’ is the writer’s own process, and it is purely human, because it happens inside the human brain.

So, everything we ever come across feeds into our thoughts and interests, thereby informing the work we produce. The exact combination of these things, and a writer’s unique take on them, is what makes the work original, and our own.

But what if the thing the writer is mulling over (inside their human brain) is an A.I.-generated synthesis of all the written material available on the internet? Say, an ‘A.I. summary’ on Google, or an idea[1] generated by an LLM on the basis of a prompt? The question is: in this context, are the ‘mulling over’ and subsequent human-written output still entirely that writer’s own work? Or have they become something else? This is where I need to take a more humble approach, because the answer is, I don’t know, and maybe it’s not up to me.

A few more thought experiments:

If everything I ever come across is considered legitimate source material for my creative process, but I want to be a 100%-human author, must I exclude A.I.-synthesised material from everything I ever come across? Is that even possible now, with A.I. snaking into every aspect of our engagement with technology, every computerised tool we touch, every piece of entertainment we consume? (I resist it, I don’t like it, but it is there.)

I’ve never used LLMs to brainstorm ideas or suggest turning points in my work, and I never will. But even with that boundary in place, can I really claim that all of the inputs my human brain digests and draws on are ‘purely’ human?

Furthermore, if purely human is morally superior in this situation, and we only consider purely human creativity to have any integrity, is that even something we can control anymore? If I’m to honour my 100%-human-authored guarantee, do I need to draw boundaries around the kinds of stories and information I myself consume, and which feed into my perspective and inform my writing?

It’s impossible to have all the answers. But I think these questions need to be teased out and resolved collectively, somehow, and enshrined in ways that protect what we cherish.

Questions for a Global Round-Table Discussion around A.I. in the field of Creative Writing:

(Who’s organising the round-table? Let me know!)

How much A.I. involvement undermines or dilutes human authorship? Who gets to decide, and how can those boundaries be drawn and maintained?

How do we address the copyright and royalty-share issues in relation to the authors whose work has been used to train the LLMs?

How do we quantify those authors’ shares of the profits the A.I. companies are making?

What about the toll of sprawling data centres on our already struggling planet?

Coming in to land:

I want to know if what I’m reading has had input from A.I., and I want readers to know that my work is my own. Because I would never be okay with readers wondering how much of my work has been generated or sparked or shaped by A.I.

I prefer to take an A.I.-free path, to the extent that I have any control over what my brain synthesises. And so I don’t use LLMs. I don’t generate anything at all using A.I., I don’t bounce ideas off ChatGPT or Claude (I don’t even know how), and I want readers to know that.

But am I in a position to define verbs and say who is or isn’t a writer? Of course not. And is it fair of me to judge how other writers approach their process? Also, no; I don’t want to be that person. I don’t have to respect all their choices, but I do have to respect their right to make them.

And while I’m not walking back my human-authored guarantee, I have to concede that I live in a world in which it’s increasingly difficult to either say what exactly that means or extricate my own lived experiences from the wider influence of artificial intelligence. Unless I move into a padded cell (not out of the question), or go off-grid (which is what my current novel-in-progress explores), I can’t fully control which inputs my brain is synthesising when writing creatively. Subconsciously and consciously, the writing brain draws on everything it encounters.

So, I won’t be working with LLMs, but I have no control over where this issue is going, or what it even means to have a 100%-human perspective … and I guess I have to be okay with that.

What about you? Where do you land on this issue? I’d love to know.

Bye for now,


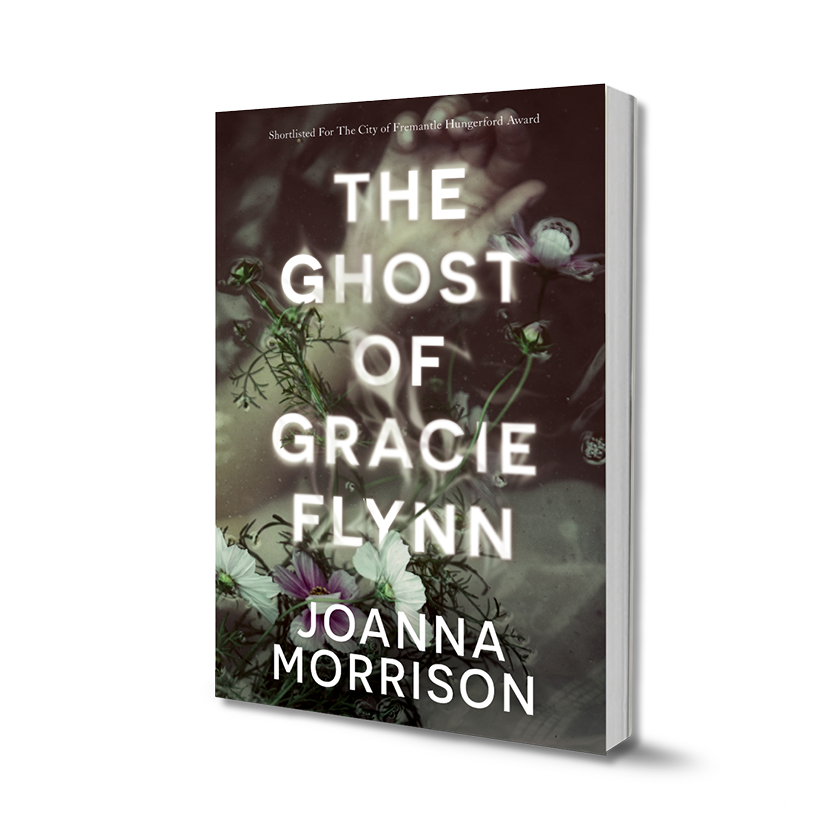

I’m Joanna Morrison, a writer based in Western Australia. My first novel The Ghost of Gracie Flynn (Fremantle Press, 2022) was shortlisted for the Hungerford Award in 2020 while my manuscript ‘Artistry’ was shortlisted for the Australian Fiction Prize in 2024. Visit my Linktree for links to my website, Instagram, media interviews, reviews, and ways to buy my book.

[1] What constitutes an idea itself deserves further dissection … are we talking about a small conceptual seed, or an overarching plot, theme or character arc? These distinctions matter, but that’s a further topic for a different essay.

Great response Jo. I have to say, conflating those who question AI's place in creative writing with racism/apartheid AND calling them zealots for daring to say that maybe outsourcing your thinking to a large language model is not going to produce great work is quite the reach, but if we're going there, we could also argue that AI is a coloniser, stealing the work of writers for its own benefit and consuming huge volumes of precious water in the process.

I felt the idea of too rigid a division between wrong/right when it comes to AI-assisted writing being comparable to racism was a bit of a stretch. For one thing, there are some serious 'wrongs' on which LLMs were built and continue to be developed. I'm not completely anti-AI. I use some forms of AI in my admin and editing processes. But I'm anti 'faking it' and I'm (I think justifiably) defensive about my career as a writer. It took about 35 years for me to get here and yes, I'm scared my hard work will all have been for nothing if we can't get readers to agree that human-authored work is more valuable than machine-authored work. And I'm also scared about how fast it's all happening and how little discussion, regulation and pause are happening before it becomes completely mainstreamed. Lastly, I'm concerned that the speed of change is deeply tangled up with the rich getting richer. These questions aren't just abstract. There are tech billionaires pushing this uncritical acceptance of gen-AI and once again, the climate and planet are the losers. And creatives are also losing - which is nothing new.